Difference between revisions of "Mandelbrot set"

(→Drawing algorithms) |

(→Basic algorithm) |

||

| Line 44: | Line 44: | ||

complex c = p.affix | complex c = p.affix | ||

complex z = 0 | complex z = 0 | ||

| − | color = | + | color = black # this color will be assigned to p at the end, black is a temporary value |

for n in range(0,N): | for n in range(0,N): | ||

if squared_modulus(z)>16: | if squared_modulus(z)>16: | ||

| − | color = | + | color = white |

break: # this will break the innermost for loop and jump just after | break: # this will break the innermost for loop and jump just after | ||

z = z*z+c | z = z*z+c | ||

Revision as of 17:54, 19 December 2015

Here we will give a few algorithms and show the corresponding results.

Contents

The Mandelbrot set

Certainly, Wikipedia's page about this set in any language should be a very good introduction to this set. Here I give a very quick definition/reminder:

The Mandelbrot set, denoted M, is the set of complex numbers $c$ such that the critical point $z=0$ of the polynomial $P(z)=z^2+c$ has an orbit that is not attracted to infinity. If you do not know any of the italicized words, go and look on the Internet.

It is interesting for two reasons:

- The Julia set of $P$ is connected if and only if $c\in M$.

- The dynamical system $z\mapsto P(z)$ is stable under a perturbation of $P$ if and only if $c\in \partial M$, where $\partial $ is a notation for the topological boundary.

Drawing algorithms

All the algorithms I will present here are scanline methods.

Basic algorithm

The most basic is the following, it is based on the following theorem:

Theorem: The orbit 0 tends to infinity if and only if at some point it has modulus >4.

This theorem is specific to $z\mapsto z^2+c$, but can be adapted to other families of polynomials by changing the threshold $4$ to another one. Here the threshold does not depend $c$ but in other families it may.

Now here is the algorithm:

Choose a maximal iteration number N

For each pixel p of the image:

Let c be the complex number represented by p

Let z be a complex variable

Set z to 0

Do the following N times:

If |z|>4 then color the pixel white, end this loop prematurely, go to the next pixel

Otherwise replace z by z*z+c

When the loop above reached its natural end: color the pixel p in black

Go to the next pixel

I am not going to use the syntax above again, since it is too detailed. Let us see what it gives in a Python-like style:

for p in allpixels:

complex c = p.affix

complex z = 0

color = black # this color will be assigned to p at the end, black is a temporary value

for n in range(0,N):

if squared_modulus(z)>16:

color = white

break: # this will break the innermost for loop and jump just after

z = z*z+c

p.color = color # so it will be white unless we ran into the line color=black

Here in a C++ like style: (std::complex<double> simplified into complex)

for(int j=0; j<height; j++) {

for(int i=0; i<width; i++) {

complex c = some formula of i and j;

complex z = 0.;

for(int n=0; n<N; n++) {

if(squared_modulus(z)>16) {

image[i][j]=black;

goto label;

}

z = z*z+c;

}

image[i][j]=white;

label: {}

}

}

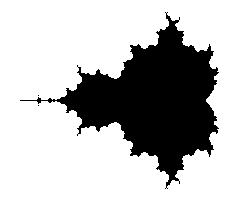

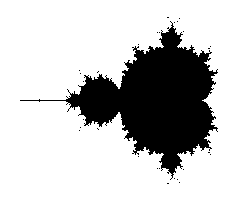

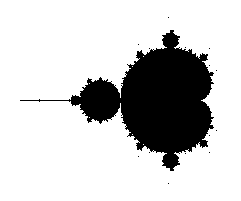

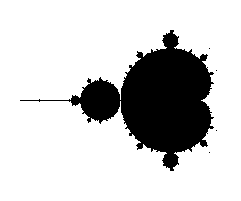

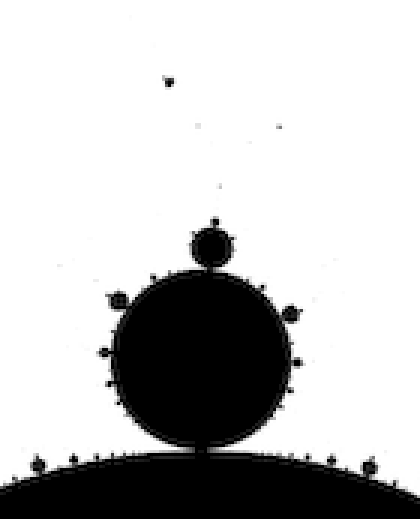

Let us now look at the kind of pictures we get with that. We an image size of 241x201 pixels, with a mathematical width of 3.0 and so that the center pixel has affix -0.75. The only varying parameter between them is the maximal number of iterations N.

All images except the last one took a fraction of a second to compute on a modern laptop. Back then in the 1980's it was different.

Ideally the maximal number of iterations N should be infinity, but then the computation would never stop. The idea is then that the bigger N is, the more accurate the picture should be.

The N=one million image took 45s to compute on the same laptop, which is pretty long given today's computers power. What happens? Every black pixel requires $10^6$ iterations, and since there are 9 771 of them, we do at least ~$10^10$ times the computation z*z+c. To accelerate this, one should find a way to detect that we are in M so that we use less than the maximal number of iterations.

But that is not the only problem with increasing N. Not only it took much time, but the difference with N=100 is pretty small. Worse: it seems that by increasing N we are losing parts of the picture. What happens is that we are testing pixel centers or corners, and there are strands of M that are thin and wiggle between the pixel centers, so that as soon as N is big enough, the pixel gets colored white.

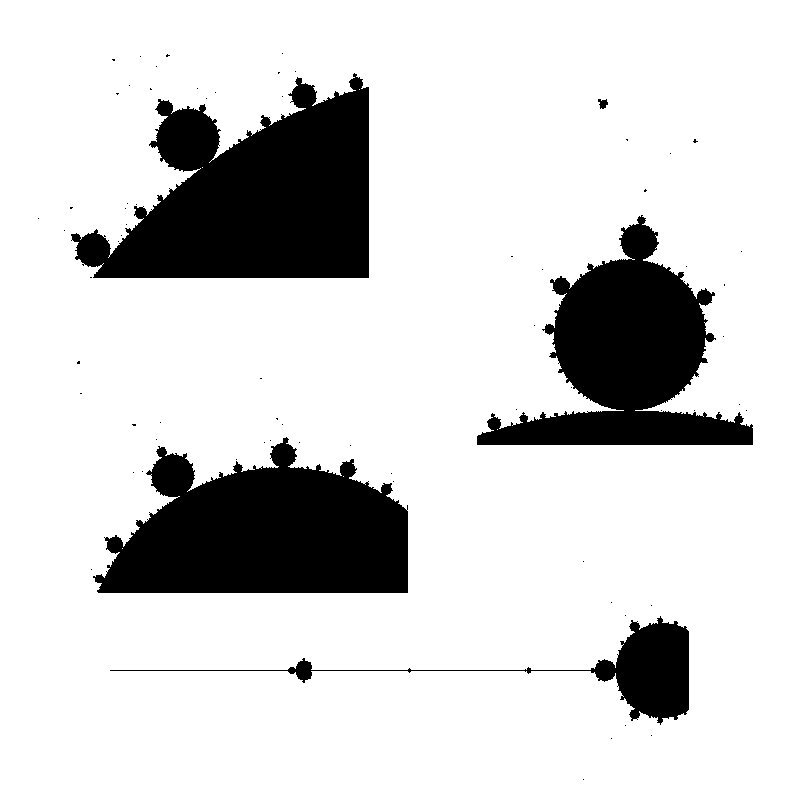

Here are enlarged versions of parts of these images, so that pixels are visible.

Let us look at a parts of a higher resolution image, computed with a big value of N: notice the small islands. The set M is connected in fact, but the strands connecting the different black parts are invisible, except some horizontal line that appears because, by coincidence, we are testing there complex numbers that belong to the real line: $M\cap \mathbb{R} = [-2,0.5]$.

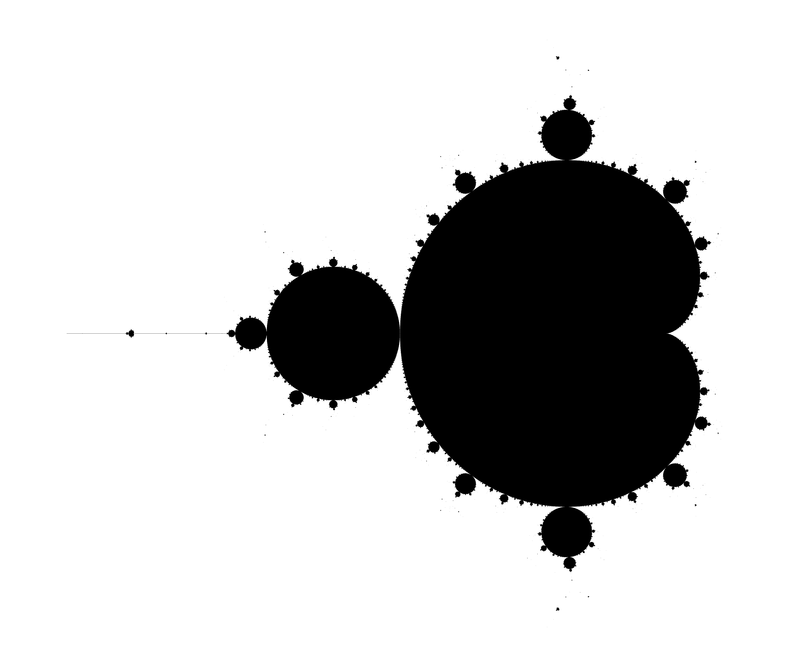

The next image has been computed using a 4801x4001 px image and then downscaling by a factor of 5 (in both directions) to get an antialiased grayscale image. It also has been cropped down a little bit, to fit in this column without further rescaling.

Last, an enlargment to see the pixels of the above image: